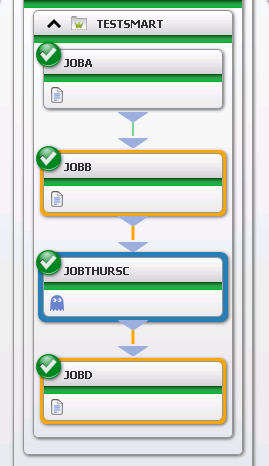

Recently I was working with a customer who had a number of IBM Datastage jobs that all had a set of standard schedules and varying unique schedules. These all needed to be dependent on each other. When trying to build a solution, I ran into an issue incorporating all of the job flows into one big flow, because the unique schedule criteria made things difficult. For example, we had easy jobs that ran Monday through Friday (M-F), but we needed to incorporate the jobs with unique schedules into the flow of jobs with the easy schedules. Let’s say that JOBA, JOBB and JOBD are the M-F jobs, and JOBTHURSC is only scheduled to run on Thursday. Without the “CTM_FOLDER_ADJUST_DUMMY” parameter, the jobs would order M-F but JOBTHURSC would not be present except on Thursdays. This causes issues every day except Thursday: JOBB would finish and not see JOBTHURSC, and JOBD would never execute because it would be waiting on JOBTHURSC.

To solve this I came across the “CTM_FOLDER_ADJUST_DUMMY” parameter. Adding the parameter to my environment meant my Thursday job was always in the flow, running as a DUMMY JOB whenever it was not its day to run. You will then have to create a Smart Folder for your jobs. The parameter tells the Smart Folder to always order every job in the Smart Folder according to the scheduling criteria of the Smart Folder. If a job’s individual schedule does not include that particular order date, the job is set to run as a “DUMMY” job. If you look closely at the job flow screenshot, you will notice the purple ghost symbol. (I think it is the bad guys from Pac-Man.) This is the indicator that the job is set to dummy. When I tested this flow it was a Monday, so all the jobs ordered in the Smart Folder, because in this case the Smart Folder’s schedule is set to EVERYDAY. The Thursday job ordered even though it was Monday, but it ran as a DUMMY JOB. So the DUMMY JOB, in this case JOBTHURSC, becomes a placeholder that keeps the flow in line without any gaps when it is not Thursday. This solution made the customer very happy. The customer’s current setup within Datastage Director did not account for this, so they had to run some jobs manually at particular times to meet their needs. That meant somebody from that team might be getting up at 5am to kick things off in certain time windows. Hopefully this solution allows the customer to focus more on their other Datastage tasks and leave the scheduling to me and Control-M.
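The ordering behavior described above can be sketched as a small simulation. This is only a rough model of the idea, not Control-M's actual logic: the `Job` class, the `order_folder` function and the day codes are names I made up for illustration, and the real product handles far more scheduling criteria than a set of weekdays.

```python
from dataclasses import dataclass

EVERYDAY = frozenset({"MON", "TUE", "WED", "THU", "FRI", "SAT", "SUN"})
WEEKDAYS = frozenset({"MON", "TUE", "WED", "THU", "FRI"})


@dataclass(frozen=True)
class Job:
    name: str
    run_days: frozenset  # days on which the job should really execute


def order_folder(jobs, folder_days, day, adjust_dummy=True):
    """Return {job name: "RUN" or "DUMMY"} for the jobs ordered on `day`.

    Hypothetical model: with adjust_dummy on, a job whose own schedule
    does not include `day` is still ordered, but as a placeholder.
    With it off, that job is simply absent from the flow.
    """
    if day not in folder_days:
        return {}  # the folder itself is not scheduled today
    ordered = {}
    for job in jobs:
        if day in job.run_days:
            ordered[job.name] = "RUN"
        elif adjust_dummy:
            ordered[job.name] = "DUMMY"
    return ordered


jobs = [
    Job("JOBA", WEEKDAYS),
    Job("JOBB", WEEKDAYS),
    Job("JOBTHURSC", frozenset({"THU"})),
    Job("JOBD", WEEKDAYS),
]

monday = order_folder(jobs, EVERYDAY, "MON")
for name, mode in monday.items():
    print(name, mode)
```

On a Monday the sketch keeps JOBTHURSC in the flow as a placeholder, so JOBD still has a predecessor to wait on. Calling `order_folder(jobs, EVERYDAY, "MON", adjust_dummy=False)` drops it, which is the gap that stalled JOBD in the first place.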

Listed below are the instructions for Version 8 from the Knowledgebase.

1. Log in to the Control-M Configuration Manager.

2. Right click on the Control-M/Server that this table resides on and choose system parameters.

3. Find the parameter named “CTM_FOLDER_ADJUST_DUMMY” and change the value to Y.

4. Restart the Control-M/Server.

5. Go back to the planning environment and create a Smart Folder for the jobs that need this solution.

6. In the Smart Folder properties select the pre-requisites tab. Within it there is an option called Adjust Condition. Set this to YES.

Last night I had a very annoying thing happen when I was building some test jobs in Control-M. The test jobs were pointed to an agent where the jobs would execute the scripts directly on the Control-M agent. I ordered the jobs into the Monitoring environment and let them run in the order that I created them in their workflow. Job 1 was only supposed to run for 1 minute. It ended up running for 5 minutes. I immediately knew something was wrong. When I attempted to read job 1’s output I was unable to retrieve it from the job. I also tried to kill the job. This action was rejected by Control-M. I started to panic because I had never seen this before. I had job 1 set to run all day, so I changed it to stop running in one minute. It stopped running and failed. I extended the time a few minutes and tried to re-run it. It resulted in the same issue as before. This time I killed the services on the agent and rebooted it. This sent the job into an unknown state. That didn’t help.

I then decided to go to the agent that was supposed to execute this job and went to the following path: \Program Files\BMC Software\Control-M Agent\Default\PROCLOG\. In the PROCLOG folder I started looking at the logs whose names started with AM_SUBMIT*, focusing on the logs that were created around the same time my job was created. I also searched within the AM_SUBMIT* log for the job ID given to the job when it was ordered into the Control-M Monitoring environment. (The job ID is in the Synopsis area for the job.) This reassured me I was in the correct log. I scanned for errors and found the following: “CreateProcessAsUser failed with error: 1314 – A required privilege is not held by the client”. I immediately went to the Support Central area of the BMC website and started looking through the Knowledge Articles for this error. Luckily I wasn’t the only Control-M admin to run across this issue.
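The log hunt above can be scripted. Here is a minimal sketch, assuming only what the post states: the logs live in the PROCLOG folder and their names start with AM_SUBMIT. The function name, the job ID value and the choice to also match the phrase "failed with error" are my own illustration; the sketch makes no assumption about the rest of the log line format.

```python
from pathlib import Path


def find_submit_log_lines(proclog_dir, job_id, pattern="AM_SUBMIT*"):
    """Return (file name, line number, text) for every line that mentions
    the job ID or contains the phrase 'failed with error'."""
    hits = []
    for log_path in sorted(Path(proclog_dir).glob(pattern)):
        if not log_path.is_file():
            continue
        # errors="replace" keeps the scan going if a log has odd bytes in it
        with log_path.open(errors="replace") as handle:
            for number, line in enumerate(handle, start=1):
                if job_id in line or "failed with error" in line:
                    hits.append((log_path.name, number, line.rstrip()))
    return hits


# Example call (the path is from the post, the job ID is made up):
# find_submit_log_lines(
#     r"C:\Program Files\BMC Software\Control-M Agent\Default\PROCLOG",
#     "0001a",
# )
```

Sorting the file names keeps the output stable from run to run. You would still want to check the file timestamps against the time your job was ordered, as described above.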

To resolve this problem, you need to increase the rights of the job owner by adding the “Replace a process level token” permission. To do so, follow these instructions:

1) Open the Control Panel / Administrative Tools / Local Security Policy

2) Add the “Replace a process level token” right to the job owner’s user account. (You may have to log out or even reboot for this change to take effect.)

If a user account other than the “Local system account” is defined under the “log on” tab of the Control-M/Agent service, then that account should be a member of the local admin group and have the following rights:

Local Security Settings:

> Adjust memory quotas for a process

> Replace a process level token

> Log on as a service

AND

List Folder Contents permissions for the agent drive

I was fortunate because all I needed to do was add the “Replace a process level token” right for the Control-M account that was executing the jobs on that server. Then I rebooted. After I did this I immediately re-ran my job, and it worked properly and returned an output. I proceeded to execute the other test jobs and they all worked correctly. Problem solved.

First proceed to your Configuration Manager, right click on the Control-M for Cloud module and go to Connection Profile Management. Then click the + for a new profile. Give your profile a name, then use the dropdown to select VMware.

Now on the account creation page you will notice several fields that you need to fill in. This information will be provided by your VMware VCenter application owner. The fields include the Web Services Server Host Name, Web Services Server Port, and Service Path. These fields build out the Server URL that the Control-M for Cloud module/agent will use to communicate with VCenter. You can also use the check boxes to turn SSL on or off, to use the default listening port for VCenter, and to use the Default Service Path. If you need to use SSL, reach out to BMC Support for help with importing the SSL configuration file onto the agent server the Control-M for Cloud module resides on. There is also information about importing the SSL configuration file on the BMC website. If you aren’t required to use SSL, just do not check “Use SSL”. The last three fields cover the username and password that Control-M for Cloud will use to access VCenter.
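To make the "fields build out the Server URL" idea concrete, here is a minimal sketch of how a host name, port, service path and SSL flag combine into one URL. The function name is hypothetical, and the defaults in it (port 443 with SSL, port 80 without, and a service path of `/sdk`) are my assumptions for illustration; use whatever values your VCenter owner gives you.

```python
from urllib.parse import urlunsplit


def build_server_url(host, port=None, service_path="/sdk", use_ssl=True):
    """Combine the profile fields into a single server URL.

    Defaults are assumptions, not values taken from the Control-M form.
    """
    scheme = "https" if use_ssl else "http"
    if port is None:
        port = 443 if use_ssl else 80  # assumed default listening ports
    if not service_path.startswith("/"):
        service_path = "/" + service_path
    return urlunsplit((scheme, f"{host}:{port}", service_path, "", ""))


# vcenter.example.com is a placeholder host name
print(build_server_url("vcenter.example.com"))
print(build_server_url("vcenter.example.com", port=8080, use_ssl=False))
```

Seeing the pieces as one URL also makes typos easier to spot, which helps when the connection test fails.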

In my shop we used an existing Control-M system account and password. The VCenter admin gave it the appropriate rights and access to be used in VCenter. In some cases the VMware admin can limit which of the three categories of Control-M for Cloud tasks (Power Tasks, Snapshot Tasks, Configuration Tasks) the system account has rights to execute. For example, at the beginning of our testing phase the admin only granted me rights to execute Power Tasks. As things move along the system account will eventually have rights to execute Snapshot operations as well. This is fine because it all depends on what your IT shop needs you to accomplish. At this time our shop just needs to automate reboots at odd hours. Eventually we hope to be involved with executing snapshots as well.

Once you have all the information you need, click test. If everything authenticates without issue you will see a popup window with a green check mark letting you know the connection is good. If you do not get this green check mark, you will have to continue working with your VMware admin through the connection issues. Just remember it’s usually going to be something simple such as firewall issues, port issues, or you just fat fingered something in the account information fields. So keep it simple when troubleshooting your Control-M for Cloud account setup issues. If you are dealing with SSL you might run into other issues, such as importing the SSL file incorrectly because of a mistake in your import script, or your VMware admin might have given you the wrong SSL file to import. If you get stuck, I recommend turning your logging on, pulling the proc logs, and opening a ticket with BMC Support to help you narrow down your issues. I recommend doing this anytime you get stuck with any type of setup for a Control-M module. BMC Support will help you get through any type of issue.
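Before opening a ticket, one quick way to separate firewall or port problems from credential problems is to check whether the agent server can open a TCP connection to the VCenter host at all. This is a generic network check, not a Control-M feature; the function name and the example host and port are placeholders.

```python
import socket


def can_connect(host, port, timeout=5.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name lookup failures
        return False


# Run this from the agent server, for example:
# can_connect("vcenter.example.com", 443)
```

If this returns False, the problem is likely in the network path (firewall, wrong port, wrong host name) rather than in the username or password. If it returns True and the test button still fails, look at the account details or the SSL setup next.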

Recently I was working on documentation for my production environment. I was clicking through some of the folders and looking at jobs. I came across a few jobs that were decommissioned a few months back, and I went to delete them out of the folder in the planning section. While highlighting multiple jobs, I accidentally deleted one of my production jobs. This led me to learn how to recover deleted jobs from my planning production environment. In fact a good colleague of mine had the same thing happen to him, and he had to work magic in his Control-M database to recover his jobs. I hope the directions below make it simple for those who have made the same mistake I have.

When you discover you have deleted a production job, go to the planning section and click on the Tools icon. Then click on Versions. After clicking on the Versions icon, click on the Control-M you are using, then select the folder that the job was deleted from. Then choose a date before the jobs were deleted. Then select Deleted Items from the Change Type: menu. After this select Restore in the bottom right.

Notice the job has the word Deleted in the Change Type field. Make sure this is the job you were missing and then click restore. This will bring the job back by itself, in a folder with the same name as the folder it was in before it was deleted. Make sure you copy the job once it is restored. Get rid of the folder it was restored into, then go back to the folder it was missing from and paste it back where it was originally. Then check this change back in and you will have your job back.

I recently attended a first-time Control-M User Group conference in the Atlanta, Georgia area. It was organized by a fellow Georgian and my favorite BMC rep. He did an outstanding job of putting on the event. It had a very nice turnout and some of the BMC technical staff showed up as well. Even better, it’s nice to know that there are fellow Control-M folks close by in my area. We discussed the latest Workload Automation Change Tool and a product called Control-M for Hadoop. We also covered some basics of Control-M Version 8 for the many audience members whose environments were still running previous versions of Control-M. Of course we had good food and SWAG too!

This first meeting has set a good foundation to possibly make an official User Group at the next event. I think the frequency of these meetings should be quarterly. This will give us plenty of time to arrange to be out of the office and keep the event from getting watered down. As things move forward I would really like to see our User Group stay consistent. We need to keep these events organized and open for good discussions. In the future our members should not be afraid to ask questions, share horror stories, share awesome stories and those Aha moments! Every time we attend these events we need to remember to make our rounds and introduce ourselves to others. Maybe we could arrange games to allow all attendees to introduce themselves. Maybe a little Control-M trivia would be cool? Such as what was the Hadoop product named after? Also these User Group events need to have a lot of SWAG from BMC. I mean we had pretty good swag at this User Group meeting but I think we should have the good stuff every time. Also let’s make sure we always have good food. (The food was good at this one.) I am just saying this group will not be allowed to do pizza. Our User Group needs to be able to listen to its members and provide answers that might help. Also, if technical BMC Control-M experts are in attendance we can lean on them for Control-M topics as well. These are just a few of my ideas. Looking forward to hearing more ideas from others! Regardless of what is decided, the end result should be “I can’t wait to try that in the office tomorrow!”

I recently added the Control-M for Cloud module to my Control-M Version 8 environment. It is a very easy install, just like any other Control-M module. I remember hearing about it in 2013 at the Control-M Briefing conference in Houston at BMC Software headquarters. During the Control-M for Cloud presentation I was very impressed with the information the presenter provided for all of the Control-M professionals in the audience. The presenter touched on how Control-M for Cloud can spin up servers using Amazon Elastic Compute Cloud web services (Amazon EC2), how it works with VMWare VCenter to automate tasks in that application such as reboots, snapshots and migrations, and how it works with BMC’s product Bladelogic, which does server automation tasks. At the time of the presentation, I knew that I wouldn’t be using much of Control-M for Cloud, but I did see some potential for this module in the future. Upon arriving home from the briefing I made sure to pass the information about Control-M for Cloud to one of my IT shop’s senior system engineers.

Now flash forward from February 2013 to March 2014. The same senior engineer I shared the Control-M information with happened to email my manager about Control-M for Cloud and its capabilities. We had a customer who realized that their application was crashing when its Linux servers were not rebooted at least once a week. The issue was that they weren’t well versed enough in Linux to navigate around and do reboots. So to solve this we decided to use Control-M for Cloud to automate the reboot task and send them an email confirming that the reboot took place, instead of having our Linux expert write a script to do this or handle the reboots every Sunday at 5:00am.

The next step involved me gathering more information on the product. I used the PDFs from the website and also reached out to colleagues. I even looked at webinars and YouTube videos that BMC pushed out for its followers to reference for help. After reading a good bit on the product, I went to the BMC website, signed in with my account, went to the Product Downloads and Patches area and found what I was looking for. I then used the Electronic Product Distribution (EPD) option to get the correct version and platform of Control-M for Cloud, and had the zip file for the install sent to me via email, all through EPD. Once I had the zip file on my desktop, I copied it onto the Control-M agent server where I intended the Control-M for Cloud module to run. I extracted the zip file and, once it finished extracting, clicked on the setup file that came out of the extraction. This brought up a BMC install window that walks you through the install. All you really need to know is the name of the Control-M agent server you are installing Control-M for Cloud on and the host server where the Enterprise Manager is located. It’s just clicking next several times and then it will begin installing. Once it completes, click finish. Then you will need to log into your host or main Control-M application server, where the Enterprise Manager is located, and run some commands from the command line. Once these commands are successfully executed your install of Control-M for Cloud is completed. (These commands are what you run after any Control-M module install.)

Here are the commands you need to complete your module install, in parentheses below.

(After completing your Control-M module install, go to the main application server. Sign in as the Admin Control-M account. Open a CMD prompt and type in “ctmgetcm”. At the first prompt, enter the agent server name you just installed the module on and press “Enter”. At the prompt ending in [*]:, enter “*” then press “Enter”. At the prompt ending in [View]:, enter “Get” then press “Enter”.)

Alright, once this is done your Control-M for Cloud installation is officially completed.

In the next post I will show you how to define a Control-M for Cloud VMWare Account.

Hey Control-M users and followers. I would like to discuss how to handle Control-M system accounts when Windows servers that are part of jobs in your distributed production Control-M environment go through planned or unexpected domain changes.

Recently in my environment we have had a number of domain changes, which have caused a number of my jobs to fail. This was primarily because the changes were not communicated all the way across the board.

To solve this issue for Control-M I had to troubleshoot a few of the problematic jobs. This led me to realize what the issue was. Our traditional domain name was slowly being phased out, a few servers at a time. The jobs were failing because the Control-M system account from the traditional domain was of no use in the new domain. I knew I had a Control-M system account created in the new domain; I just had not had to use it yet. To fix this I placed a request with the Active Directory admin to add the new domain Control-M system account to the servers that had the domain change and were involved with my failed jobs, and also to give it read, write and delete rights. Once the admin completed my request, I also set the new system account in the job itself, in the run-as field. Then I re-ran all the jobs that had failed for this reason and they began working again.

To be proactive, I plan on adding the new domain Control-M system account to all of the servers my jobs touch that still have the traditional domain name. That way, as they’re migrated to the new domain, the new domain system account will already be on the server. Access and rights will not be affected and none of my customers will be impacted.

In March 2012 I was brought in as an intern at my company to shadow the ways of IT and also be a part-time Control-M scheduler. As soon as I started in the IT arena I knew that I was going to be constantly drinking knowledge from a fire hose. To help me handle this I began with something I do well, which is networking! The first thing I did was create this blog and start posting things that I came across. I guess you could call this blog a journal of my Control-M progress. This led to a good amount of hits and also some comments from other Control-M experts. Another thing I did was build all kinds of jobs in our Control-M development environment. This served as good practice and helped me really dive in and learn certain functions of Control-M. I also did a lot of googling for anything on Control-M I could find. I did find things, but a lot of the stuff I found didn’t apply to my environment or covered subjects well beyond my skillset. It was very frustrating, because Control-M is such a small specialty that it’s hard to find a lot of good information online. Eventually I stumbled upon a site run by a Control-M subject matter expert that was dedicated to his time with Control-M. I reached out to him by emailing the contact information on his site, just to start up some Control-M conversation. Well, it turned out he loves helping folks in the Control-M community. This led to a lot of resourceful emails and phone calls. Eventually I was able to bring this guy on to do some Control-M training and other types of work for my IT shop. From our constant Control-M dealings I now consider this guy a friend and Control-M colleague. I also developed a relationship with one of BMC’s Control-M experts and salesmen. He was always good about checking on me and answering all my questions. He even advised my manager at the time to upgrade our Control-M environment from version 7 to version 8.
His recommendation was approved, and the next thing you know I was tasked with the migration. Around this time I also began working with BMC Support on a lot of the issues I ran across. This was also a good resource for me in certain situations. Another thing that came along: in Feb. 2013 BMC Software invited me to one of their annual Control-M Briefing conferences in Houston. Going into this I thought it would be just a boring quick turnaround trip. I was wrong about that. It turned out to be a good time and led me to more Control-M knowledge and even more subject matter experts to network with. In fact, one of the contacts I met I still reach out to today with Control-M questions, and I sometimes shoot him a text to see how he is doing. He happens to be a really talented Control-M administrator and scheduler. In fact he was pretty much born to be a Control-M expert, because his mother is a career Control-M scheduler as well. I just think that is a really neat side note. Another thing I did to increase my networking opportunities was create a LinkedIn account. This has been a very useful tool and has put me in contact with a lot of good IT connections and other folks in business as well.

Building up a strong network of Control-M colleagues and constantly building things in development catapulted me from being an intern in March 2012 to owning Control-M at my shop by December of 2012. It doesn’t hurt to network and be persistent no matter what you’re trying to accomplish.

It’s been a slow process getting jobs into production the last two months. Even so, I still think I have had a lot of good wins.

I am improving at making batch files, but finding job owners and identifying the purpose of jobs has been very challenging. I am starting to pile up newly tested scripts that are in need of a nice owner and a detailed run book. It’s funny to me that, looking back, I was struggling with simple tasks. Now those tasks are a walk in the park, but I am held up searching for job owners and then waiting for run book information to be sent to me via email. In fact, when I get impatient I have interviewed a few job owners over the phone to make sure I get the information I need. Well, more like a run book interview. I am persistently hunting these folks down and doing my due diligence. It will pay off, but I am learning to be patient during this process.

Also during this process, or migration, or whatever this is, I have become acquainted with scripting batch files. I have re-engineered older long-winded scripts and simplified them using batch (embedded scripts). Some of these I have done on my own, and with others I have had the help of my friend and heck of a Workload Automation expert Robert Stinnett. http://www.robertstinnett.com/control-m-resources/ I am slowly building a foundation in basic scripting and can now read advanced scripts very well. This is going to come in handy as I move forward.

One thing I am still trying to perfect is setting variables in my scripts using the Control-M parameter option. Some of the basic functions, such as setting date stamps in formats like ddmmyyyy, yymmdd, yyyy, mmddyyyy and others, are very easy for me now. I still find I have to go back, check my work and test when I substring variables within the parameter setup in Control-M. I usually get it, but I always have to check it a few extra times. It’s fun to play with, but I would like to be confident the first time I substring something out.
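One way to double check a date stamp or substring before putting it into a job is to work it out in a scratch script first. The sketch below uses plain Python, not Control-M variable syntax. The `stamp` and `substr` helpers are made up for illustration, and `substr` counts positions starting at 1, which is an assumption you should verify against the Control-M documentation for your version.

```python
from datetime import date

# Layout names from the post mapped to Python strftime patterns
FORMATS = {
    "ddmmyyyy": "%d%m%Y",
    "yymmdd": "%y%m%d",
    "yyyy": "%Y",
    "mmddyyyy": "%m%d%Y",
}


def stamp(day, layout):
    """Format a date using one of the layouts above."""
    return day.strftime(FORMATS[layout])


def substr(value, start, length):
    """Return `length` characters of `value` beginning at position `start`.

    Positions start at 1 here (an assumption for this sketch).
    """
    if start < 1 or length < 0:
        raise ValueError("start must be >= 1 and length must be >= 0")
    return value[start - 1:start - 1 + length]


order_date = date(2014, 3, 9)           # sample date for the walkthrough
yymmdd = stamp(order_date, "yymmdd")    # "140309"
month = substr(yymmdd, 3, 2)            # characters 3 and 4 -> "03"
print(yymmdd, month, stamp(order_date, "ddmmyyyy"))
```

Writing out the expected characters by position like this makes off-by-one mistakes show up before the job ever runs.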

I have also made major use of the documentation link that is reachable by clicking on a job in the Control-M environment. You can point your documentation to a web link or to a file path. It worked better for me to use a web link and set it up that way. I also took the time to set up a standardized template saved as a PDF document, so my run books are standard and very easy to read. I have received a few kudos for this setup. Run books are very tedious and annoying to set up but very necessary. The cool thing is that when a particular job has issues, the folks monitoring the jobs can use the documentation link and it takes them straight to the PDF for that particular job. It then loads the PDF right in front of their eyes so they can follow the instructions. This has been my simplest task in this whole project, but it is something I take a lot of pride in! People love run books but nobody wants to do them. I promise you I will be doing them for a long time!

I have done three slide deck presentations on Control-M in the last two months and I am about to do my fourth one this Friday. I am looking forward to it but very nervous about presenting at the same time. This presentation will make or break my Control-M career at my shop. No pressure, right? Oh yeah, by the way, as a Workload Automation expert at your IT shop you also have to be able to sell your product internally to other departments! If I sell this right I will be the Control-M King at my shop. No pressure, right?

So things are looking good. Jobs are slowly but surely being migrated. I am starting to be able to sell the product like a Control-M salesman, minus the sharp shirt and tie. I am getting versed and comfortable in scripting. I am making sure every job has a good run book. I am implementing a good setup model for my Control-M environment. I am learning new things and, most importantly, having fun doing all of this!

Have you ever wondered if you could upgrade your older Control-M environment yourself? I think a lot of Control-M customers and users don’t realize that BMC Software gives you the tools you need to do the popular upgrade to Version 8 yourself. As a matter of fact I have just done an upgrade on my own. Here is my approach. It’s practical, safe and most of all cost efficient for you!

First of all, the Development environment is my sanctuary and my canvas for IT perfection. So here we go. First you can do one of two things. You can download the Control-M Version 8 Upgrade Guide from the BMC website, or you can reach out to the BMC Support Team by email or phone and ask them for the requirements for your Version 8 Development environment. (You will only have access to this material or to the Support Team if you have an existing Customer Support Account. Ask your BMC Account Rep to get you started.) Once you know the requirements for your servers and database, pass them on to the people in your organization who can make this new Control-M Development environment come to life. Put in a request to have your Server team set up a Development environment. Then put in a request with your Database Group/Team, or whatever your organization calls them, to have them throw an SQL Server Agent on one of the servers you requested. (I don’t know how Postgres databases work; if that’s what you are currently using, talk to BMC Support.) It really is this simple to get started. I can’t guarantee that your team members from these other groups will be able to get your environment and database created in a timely manner, but I can assure you that you are off to a good start on your first Control-M Version 8 upgrade. I am basically saying hurry up and wait. OK, so once their work is completed, folks, you have the green light to get officially started.

Let me introduce you to a new friend of mine named the Amigo Ticket System. Open a ticket and request an Amigo Ticket with BMC Support. If you aren’t sure how to open a ticket on the website, just call the Support number and the Support person will create and escalate your ticket for you, and somebody will call you. The callback time depends on the severity of the case. A development environment would be a level 3, so I would give it two hours at the most. If it takes longer, they are just busy helping a lot of customers. The Amigo ticket allows you to talk to BMC Support and go over the Upgrade Guides to familiarize yourself with the whole upgrade process. Once you have an understanding of everything, contact Support again, reference your Amigo Ticket number, and let them know you understand the process. If you still aren’t sure, ask them again. That’s their job and they take pleasure in helping you handle any issue. That’s why they call it the Amigo Ticket. So you asked again to clarify a few things, maybe even talked in circles. It’s all fine. Now you know what is involved. Next you need to plan how you want to go about starting this process. They can help you with this, but since they know this is a new Control-M Development environment there is not as much planning involved for you or your BMC Support representative, largely because there are no downtime issues when doing an upgrade in Development. Which is fine: you’re here to learn the new Version 8 interface and prepare for the real challenge of upgrading your production environment.

The next steps are to go to the Downloads and Patches section under the BMC Support area. Go in and select the components that you currently have in your older Control-M version. The Configuration Manager in your older environment will tell you which modules you need to download. I prefer to select ISO as the file type. To me this was the best route to go. There are many file options. Use the one that is easiest for you and your environment. Select the FTP manager once you have selected all of the components you know you need. You will then receive an email with an FTP link. Paste this link into a browser on each server, then access the link and drop the ISO files that are needed on each server. Another side note: if your environment is virtual, you need to have your Server Admin mount the ISO files for you. If it is not virtual, just have the ISO files burned to a disc. I had mine virtually loaded in the DVD drive on each Development server. Do not try to mount on the database server. The main Workload Automation V8 install will set up the database for you. Make sure you work with your database folks to get the proper access and rights to that database server. I launched the Control-M 8 install file and just followed the steps. (Make sure you take note of what you name your Control-M server and your EM server. And make sure you get the username and password for your database from the DBA who set everything up for you.) Take notes or screenshots of all the usernames and passwords you create during this process. There will be occasions in the future when these come in handy. They will definitely be needed to use the migration toolkit from your older version to Version 8. I prefer screenshots. Continue through the step-by-step process and the Workload Automation install is complete. Now just download all of the Control-M modules that you want on each agent. You can download every module on every agent.
Or you can download only certain modules for certain agents. It all depends on your design strategy.

The rest of the work is defining all of your components in the Configuration Manager. This can be done by reading the Control-M Administrator Guide for Version 8 or by Webex with Control-M Support.

This whole process really is that simple. This post is just to get you familiar with the process and to help you ask good questions when you contact BMC Support to let them know you are ready to upgrade to Control-M Version 8. Make sure you read the material given to you during the Amigo Ticket process and ask every question you can while you have BMC Support on the phone. It’s really not that hard.

Helpful Tips:

1. Make sure each server for the new environment has the proper requirements that BMC designates.

2. Be the Administrator of each Server.

3. Turn off UAC.

4. Do not delete your old environment until you have completed a toolkit migration of the old data and you are 100% sure you are ready for the Version 8 environment.

5. You should do this install in Development and enjoy the process and take lots of notes.

6. Have your team create the same number of servers involved as in your older Control-M environment.